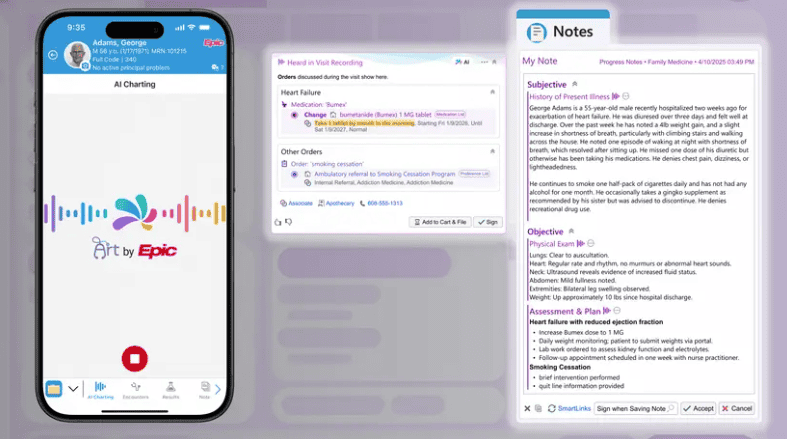

The wait is over. Epic’s scribe has arrived, and it’s packing a lot more than ambient notes.

“AI Charting” goes beyond transcription. The fully built-in feature not only listens during patient visits and drafts notes but also queues up orders based on the conversation.

- The initial release lets clinicians personalize the note structure using voice commands (e.g., asking it to format the history of present illness as a bulleted list).

- Epic is positioning AI Charting as the killer app for Art, its clinical copilot, which also includes a pre-visit Insights tool that’s apparently already being used 16M times per month.

Distribution is king. Over 40% of U.S. hospitals are on Epic, and an AJMC study from just last week showed that two-thirds of those hospitals have already adopted ambient AI.

- AI Charting is breaking onto the scene through one of healthcare’s biggest distribution channels, and Epic has a ton of levers it can pull with pricing and bundling to start stealing share (DAX Copilot, Abridge, and ThinkAndor accounted for ~80% of Epic hospitals in the recent study).

- STAT reports that rather than charging a per-user-per-month fee like most ambient AI platforms, Epic plans to sell AI Charting under a separate license, with the price varying by org size and utilization to get the tool into as many hands as possible.

It’s time to differentiate. The race is on for established players to prove they can deliver value that Epic’s integrated approach can’t match.

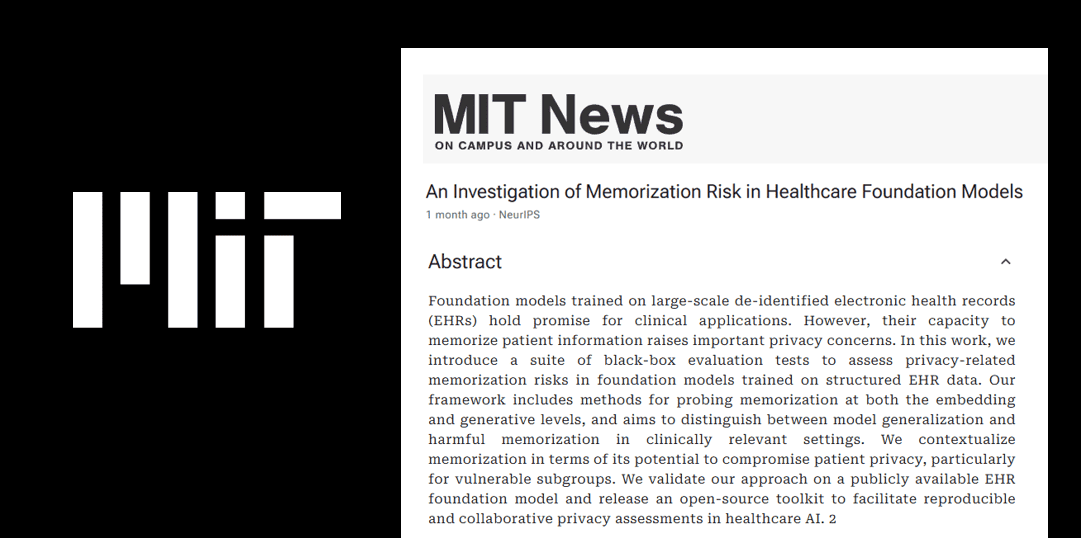

- That means tackling problems that are too messy for Epic to touch (Abridge bringing real-time prior auths to the point of conversation), or too specialized for it to get right with so many other plates spinning (Nabla raising the bar for AI safety with world models).

- Epic is working closely with Microsoft to get new features online quickly, but nailing multiple specialties in countless languages could still prove to be a job better suited to a company with a dedicated focus.

- Epic might own the “operating system” almost as much as Microsoft owns Windows, but just because MS Paint exists doesn’t mean the world doesn’t need Adobe Photoshop.

The Takeaway

Ambient scribes proved how fast health systems would layer on their own AI if Epic couldn’t keep up. Now we’ll have to wait and see whether the cost and experience of Epic’s scribe are enough to compete with the flock of ambient AI innovators dedicated to this problem.